What is SLAM?

SLAM is an acronym standing for Simultaneous Localisation And Mapping. It is a computational process that continually performs two functions at the same time:

- 🧭 Localisation: working out where an agent is within its environment.

- 🗺️ Mapping: building up a spatial model of the environment as the agent explores it.

These functions are related to one another – having a better map helps you to be more sure of where you might be, and knowing where you are helps you to lay out a more accurate map based on what you have seen.

If you have good prior knowledge of your location (eg. via GPS) or your environment (eg. via an existing, accurate map), the other task becomes much simpler, because it can work against a solid reference. SLAM applies to situations where neither the location nor the environment is known in advance. At its core, it works to manage the inherent uncertainty of this situation and arrive at the best available estimate of both.

What is the Business Case for SLAM?

The fundamental value of SLAM to a potential customer is the ability to locate one or more resources within an operating environment. For example, tracking forklifts within a warehouse lets the warehouse manager know how many forklifts are in use, and where they are. The warehouse manager can make quicker and better decisions using this information. This is sometimes known as human-in-the-loop, because SLAM is being used to report information to a human.

SLAM can also be used to feed into a system which then controls a piece of equipment. For example, a SLAM-enabled automatic lawnmower would gain knowledge of the unique layout of the garden in which it’s operating, and could use location information to make sure that it covers all the grass that it needs to cut. This is sometimes known as SLAM-in-the-loop, as the SLAM system is being used to decide how the lawnmower should move. For this reason, SLAM-in-the-loop usually has tighter accuracy requirements for both the localisation and mapping steps.

Advantages and Disadvantages

The advantages of SLAM over other methods of localisation and mapping include:

- Lower cost to scale: one piece of SLAM apparatus (a computing module and one or two cameras) is required per object to track. This is usually cheaper to scale up than other methods, which require specialised and expensive infrastructure equipment. Other methods also typically need their tracking infrastructure extended to cover each new environment; in contrast, SLAM equipment can easily be moved into new environments because it learns their layout automatically.

- Less infrastructure required: one piece of SLAM apparatus requires a small amount of power, an adequate wireless network connection, and ideally a small number of fiducial markers in the environment, in order to operate. Other technologies like ultra-wideband tracking require specialised equipment to be installed and set up anywhere that tracking will take place.

- Self-learning: SLAM equipment can automatically learn the shape of its environment, making it easier for humans to interpret the reported locations in the context of their surroundings.

Some disadvantages of SLAM include:

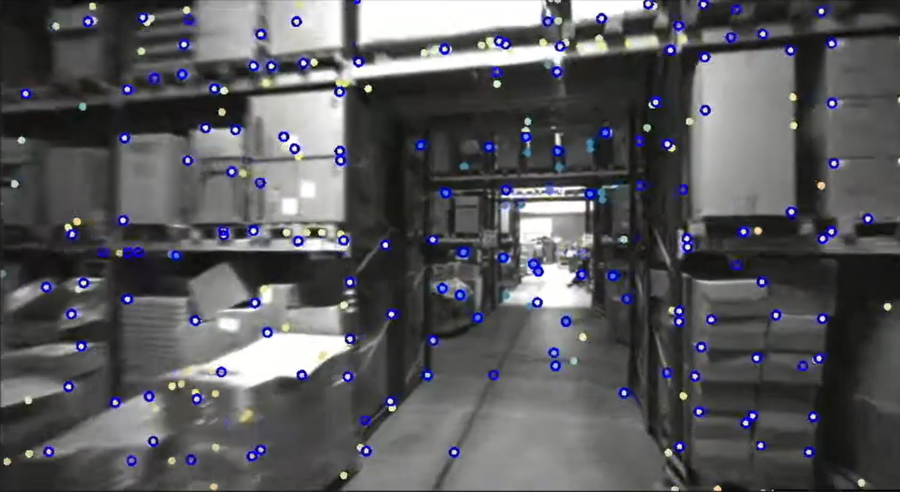

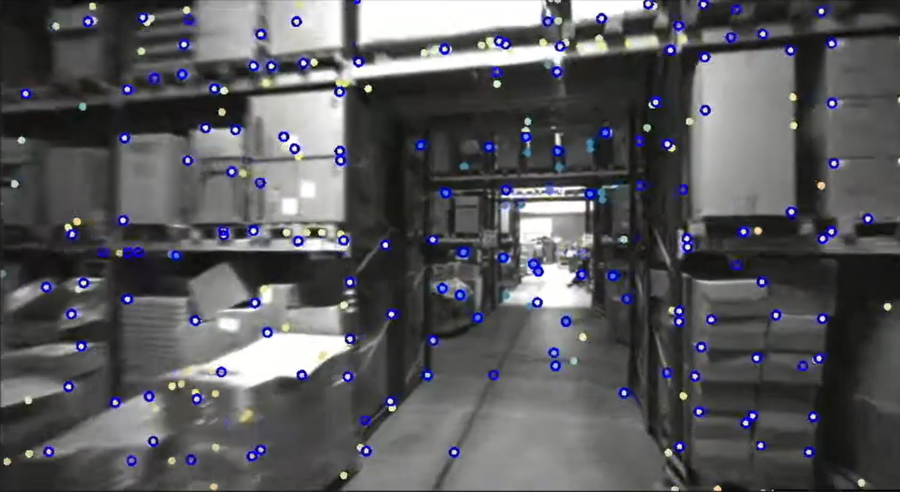

- Sensitivity to lighting conditions: as camera-based SLAM is primarily a visual tracking system, its success depends heavily on the quality of visual input. Environments that are too bright or too dark (making it difficult to discern visual features), or too reflective (where glare obscures key environmental details), can degrade the quality of the results.

- Sensitivity to moving objects: SLAM operates largely under a “static world” assumption, which is to say that the features it identifies in its environment are assumed to stay in place. Moving objects within the environment can break this assumption and produce errors in localisation and mapping, although techniques can be employed to mitigate the effect of dynamic objects.

- Sensitivity to the visual nature of the environment: environments with few distinctive features (eg. many blank walls, or many identical, repetitive structures) provide few landmarks for a SLAM system to make use of. This can make it more difficult for the system to interpret its surroundings, or to judge how far it has travelled.

How SLAM Works

In General

The SLAM problem (which can be expressed mathematically, if you’re that way inclined) is to compute an estimate of an agent’s state (eg. position and orientation), and a map of its environment, given:

- Some observations of the agent’s environment;

- Some information about the agent’s recent motion, and;

- The agent’s prior state before moving.
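For readers who are that way inclined, one common way to write this down is the recursive "online SLAM" posterior used in the probabilistic robotics literature. The notation is an assumption introduced here for illustration, not something defined elsewhere in this text: $x_t$ is the agent's state at time $t$, $m$ is the map, $z_{1:t}$ are the observations received so far, and $u_{1:t}$ is the motion information received so far.

```latex
p(x_t, m \mid z_{1:t}, u_{1:t})
  \;\propto\;
  p(z_t \mid x_t, m)
  \int p(x_t \mid x_{t-1}, u_t)\,
       p(x_{t-1}, m \mid z_{1:t-1}, u_{1:t-1})\,
  \mathrm{d}x_{t-1}
```

The three factors on the right line up with the three inputs listed above: the observation model $p(z_t \mid x_t, m)$ uses the new observations, the motion model $p(x_t \mid x_{t-1}, u_t)$ uses the recent motion, and the remaining term is the belief about the prior state and map.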

This computation is run in a loop while the agent is active. Each time the loop is iterated, data about the agent’s location and the environmental map is computed based on the previous state, and on new sensor readings that have been received since the last iteration.
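The loop described above can be sketched with a deliberately tiny toy. This is not a real SLAM algorithm: it assumes a 2D position with no orientation, landmarks that arrive already identified by name, and observations given as offsets from the agent. The names `SlamState`, `slam_step` and `gain` are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass, field

Vec = tuple[float, float]


@dataclass
class SlamState:
    """Current estimate: where the agent is, and where the landmarks are."""
    position: Vec = (0.0, 0.0)
    landmarks: dict[str, Vec] = field(default_factory=dict)


def slam_step(state: SlamState, motion: Vec,
              observations: dict[str, Vec], gain: float = 0.5) -> SlamState:
    """One loop iteration: previous state + new readings -> new state."""
    # 1. Predict: apply the estimated motion to the previous position.
    px = state.position[0] + motion[0]
    py = state.position[1] + motion[1]

    # 2. Localise: every landmark already on the map implies a position
    #    for the agent (landmark position minus the observed offset).
    implied = [
        (state.landmarks[name][0] - dx, state.landmarks[name][1] - dy)
        for name, (dx, dy) in observations.items()
        if name in state.landmarks
    ]
    if implied:
        mean_x = sum(p[0] for p in implied) / len(implied)
        mean_y = sum(p[1] for p in implied) / len(implied)
        # Blend prediction and observation; `gain` is a hand-picked weight.
        px += gain * (mean_x - px)
        py += gain * (mean_y - py)

    # 3. Map: place newly seen landmarks relative to the corrected position.
    landmarks = dict(state.landmarks)
    for name, (dx, dy) in observations.items():
        if name not in landmarks:
            landmarks[name] = (px + dx, py + dy)

    return SlamState(position=(px, py), landmarks=landmarks)


state = SlamState()
# First frame: no motion yet, landmark "A" seen 2 m ahead.
state = slam_step(state, motion=(0.0, 0.0), observations={"A": (2.0, 0.0)})
# Second frame: odometry over-reports the move (1.2 m instead of 1.0 m),
# but "A" is now seen 1 m ahead, which pulls the estimate back towards 1.0.
state = slam_step(state, motion=(1.2, 0.0), observations={"A": (1.0, 0.0)})
print(state.position, state.landmarks)
```

The fixed `gain` stands in for the uncertainty weighting that a real system would derive from its sensor models; it is used here only to keep the example short.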

Different methods may be used to estimate the agent’s recent motion. The formal mathematical model of SLAM uses the concept of discrete “control commands” used to drive a robot, but in practice these commands may not be easily available, or (in the case of a human-driven forklift, for example) may not apply at all. Odometry based on a camera video stream, or other physical sensors, is typically used instead.

Because the estimate of motion contains inherent uncertainty, the estimated location of the SLAM agent can become less accurate over time as errors accumulate. This is referred to as drift, and can be corrected to some extent by monitoring for locations that the SLAM agent knows it has already visited. If the agent returns to a position it has visited before, this information can be used to “anchor” the agent better in its environment. This process is referred to as a loop closure, since the agent’s overall trajectory has formed a loop.
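Drift and loop closure can be illustrated with a small, deterministic toy. The sketch below assumes a hypothetical odometry source that is wrong by the same small bias on every step, and it corrects the trajectory by spreading the end-of-loop mismatch evenly along the path. That linear spreading is a stand-in chosen for brevity; it is not how a production system works, which would typically solve an optimisation problem over the whole pose graph. The function names are made up for this example.

```python
Pose = tuple[float, float]


def dead_reckon(start: Pose, steps: list[Pose]) -> list[Pose]:
    """Integrate odometry steps into a trajectory (errors accumulate)."""
    poses = [start]
    for dx, dy in steps:
        x, y = poses[-1]
        poses.append((x + dx, y + dy))
    return poses


def close_loop(poses: list[Pose]) -> list[Pose]:
    """Assume the last pose revisits the first, and spread the mismatch
    linearly along the trajectory (a stand-in for a real optimiser)."""
    n = len(poses) - 1
    err_x = poses[-1][0] - poses[0][0]
    err_y = poses[-1][1] - poses[0][1]
    return [(x - err_x * i / n, y - err_y * i / n)
            for i, (x, y) in enumerate(poses)]


# True path: a 4 m x 4 m square driven in 1 m steps, ending at the start.
true_steps = ([(1.0, 0.0)] * 4 + [(0.0, 1.0)] * 4
              + [(-1.0, 0.0)] * 4 + [(0.0, -1.0)] * 4)
# Hypothetical odometry error: every step is off by the same small bias.
bias = (0.02, -0.01)
measured = [(dx + bias[0], dy + bias[1]) for dx, dy in true_steps]

estimated = dead_reckon((0.0, 0.0), measured)
corrected = close_loop(estimated)

print("end pose from odometry alone:", estimated[-1])
print("end pose after loop closure: ", corrected[-1])
```

Odometry alone leaves the agent believing it finished some distance from where it started, even though it drove a closed square. Recognising the start position supplies the extra constraint needed to pull the whole trajectory back into shape, not just the final pose.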

At Slamcore

The Slamcore implementation of SLAM is divided into three broad subsystems:

- Frontend: Takes in measurements from sensors.

- Local backend: Performs odometry and calculates the agent’s current pose.

- Global backend: Performs heavier processing to look for loop closures and correct drift.

At a high level, the SLAM algorithm at Slamcore consists of the following stages. Together, these stages make up the processing of a single frame, and frames are processed continuously until the software is terminated.

- Preprocess received images

- Detect fiducials and other landmarks

- Add new pose to backend

- Perform pose graph optimisation

- Detect potential loop closures and schedule processing of them
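The ordering of the stages above can be sketched as a per-frame skeleton. This is purely illustrative and is not Slamcore's code or API: every class, method and parameter name below is hypothetical, every stage body is a placeholder, and the toy "image" is simply a list of landmark names. The only thing it is meant to show is the stage order, and that loop closure candidates are queued for later processing and not handled inline.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class FramePipeline:
    """Hypothetical skeleton; every stage body is a placeholder."""
    poses: list = field(default_factory=list)
    first_seen: dict = field(default_factory=dict)  # landmark -> frame index
    loop_closure_queue: deque = field(default_factory=deque)
    min_gap: int = 3  # frames that must pass before a re-sighting counts

    def preprocess(self, image):
        # Placeholder: a real system might undistort or rectify here.
        return image

    def detect_landmarks(self, image):
        # Placeholder: the toy "image" is already a list of landmark names.
        return set(image)

    def add_pose(self, pose):
        self.poses.append(pose)
        return len(self.poses) - 1

    def optimise_pose_graph(self):
        # Placeholder: a real backend would refine self.poses here.
        pass

    def schedule_loop_closures(self, frame_index, landmarks):
        # A landmark seen again after a long gap is a loop closure candidate.
        for landmark in sorted(landmarks):
            first = self.first_seen.setdefault(landmark, frame_index)
            if frame_index - first >= self.min_gap:
                self.loop_closure_queue.append((first, frame_index))

    def process_frame(self, image, pose):
        image = self.preprocess(image)
        landmarks = self.detect_landmarks(image)
        frame_index = self.add_pose(pose)
        self.optimise_pose_graph()
        self.schedule_loop_closures(frame_index, landmarks)


pipeline = FramePipeline()
frames = [["door"], ["shelf"], ["pillar"], ["window"], ["door"]]
for i, image in enumerate(frames):
    pipeline.process_frame(image, pose=(float(i), 0.0))

print(list(pipeline.loop_closure_queue))
```

Queuing candidates and deferring their processing mirrors the split described earlier between a backend that keeps up with each frame and one that performs heavier processing.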